Software

Touch Designer is a visual development software for creating realtime projects with rich user experiences. It would allow me to control devices and media simultaneously:

- Connect a live camera to a video projector for motion detection.

- Based on the feedback from the camera, the projector plays the programmed interactive visual media on areas of my art installation.

Equipment

- Computer for running Touch Designer (e.g. laptop or Raspberry Pi)

- Video projector

- Live camera

- Light

- Art installation

- Miscellaneous components (extension cords for example)

How It Works

When viewers approach the art installation, the camera above it detects motion and sends this information to the computer running Touch Designer.

Touch Designer generates the programmed interactive visual media based on this feedback and sends it to the video projector.

The video projector plays the results within areas mapped onto the art installation. Touch Designer also controls this mapping.

Some of the blocks show a live feed of the viewers themselves, while other blocks get assigned images or text. Touch Designer generates these and runs them through filters that modify the visual media (turbulent distortion or changing colours, for example).

The idea is to get the installation to respond to people using motion sensors and light.
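As a rough illustration of the motion-detection step described above, here is a minimal sketch in plain Python that compares two camera frames and reports how much of the image changed. It is not the Touch Designer network used in the installation. The function names, the nested-list frame format, and the threshold values are all assumptions made for the example.

```python
from typing import List

# Assumption: a frame is a grayscale image stored as rows of 0-255 values.
Frame = List[List[int]]


def motion_amount(previous: Frame, current: Frame, pixel_threshold: int = 25) -> float:
    """Return the fraction of pixels whose brightness changed by more than pixel_threshold."""
    changed = 0
    total = 0
    for prev_row, curr_row in zip(previous, current):
        for prev_px, curr_px in zip(prev_row, curr_row):
            total += 1
            if abs(curr_px - prev_px) > pixel_threshold:
                changed += 1
    return changed / total if total else 0.0


def motion_detected(previous: Frame, current: Frame, min_fraction: float = 0.05) -> bool:
    """Treat the scene as 'moving' when enough pixels changed between frames."""
    return motion_amount(previous, current) >= min_fraction


# Hypothetical 4x4 frames: an empty room, then a viewer entering the middle.
empty_room = [[10, 10, 10, 10] for _ in range(4)]
viewer_enters = [row[:] for row in empty_room]
for y in (1, 2):
    for x in (1, 2):
        viewer_enters[y][x] = 200

print(motion_amount(empty_room, viewer_enters))
print(motion_detected(empty_room, viewer_enters))
```

Comparing consecutive frames is only one common way to detect motion. A real setup would also need to deal with camera noise and changes in the projected light.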

Equipment

Initially, I wanted to use Kinect. It uses infrared light to detect motion and is a lot more accurate than a regular camera. However, the accuracy decreases when there are more people in front of the installation.

Regular cameras are not as sensitive and rely on light gradations to process images, and I could use this to identify the distance of viewers from the installation. The camera turned out to be the best choice for large groups of people. Because of how cameras work, lighting needed to be added to make viewers visible to the camera.

I set up a light on the floor at the back of the installation to shine onto viewers and assist the camera. The farther viewers are from the light, the darker their bodies appear compared with people standing closer to the installation.
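The idea of reading distance from brightness can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: the region of the camera image is a nested list of grayscale values, and `dark_level` and `bright_level` are hypothetical calibration values that would have to be measured in the actual room.

```python
def mean_brightness(region):
    """Average the grayscale values (0-255) of a region of the camera image."""
    pixels = [px for row in region for px in row]
    return sum(pixels) / len(pixels) if pixels else 0.0


def estimate_proximity(brightness, dark_level=30.0, bright_level=180.0):
    """Map brightness to a value from 0.0 (far, dark) to 1.0 (close, well lit).

    dark_level and bright_level are made-up calibration points, not measured values.
    """
    span = bright_level - dark_level
    if span <= 0:
        raise ValueError("bright_level must be greater than dark_level")
    value = (brightness - dark_level) / span
    return max(0.0, min(1.0, value))  # clamp to the 0..1 range


# Hypothetical 2x2 patch of the image covering one viewer.
viewer_patch = [[100, 110], [90, 100]]
print(estimate_proximity(mean_brightness(viewer_patch)))
```

Brightness is only a rough stand-in for distance, since clothing and skin tone also affect how much light reaches the camera.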

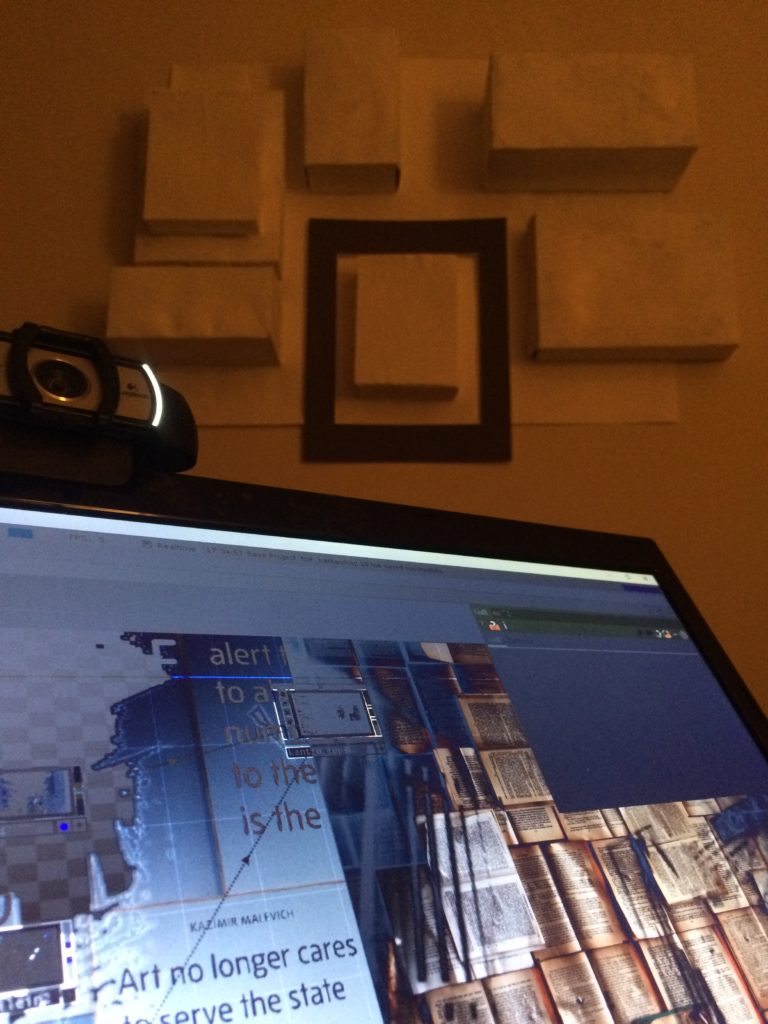

Image gallery below: From left to right, experimental version of colours and images being projected onto the surfaces of white 3D rectangles on the wall

Process

I started by building a small version at home, using a web camera and Touch Designer on my laptop. It took me a few weeks to get all these components to work together and to learn how to map video projections onto surfaces.

Once I had the tech set up, I turned my attention to stylizing the video blocks with bits of text and images, and deciding how I wanted them to respond to viewers.

When someone is too far from the installation, some blocks change to a solid colour. When they come closer to it, the blocks show live video feeds of the viewers or visual media.
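The switching behaviour described above could look something like the following sketch in plain Python. It assumes a proximity value between 0.0 (far) and 1.0 (close) is already available. The class name, mode names, and threshold values are hypothetical. The two separate thresholds are an addition for the example, intended to keep a block from flickering when a viewer stands right at the boundary.

```python
class BlockState:
    """Track whether one block shows a solid colour or its media."""

    def __init__(self, show_at=0.6, hide_at=0.4):
        if hide_at > show_at:
            raise ValueError("hide_at must not be greater than show_at")
        self.show_at = show_at  # proximity needed to reveal the media
        self.hide_at = hide_at  # proximity below which the block goes solid again
        self.mode = "solid_colour"

    def update(self, proximity):
        """Update the block's mode from the latest proximity value and return it."""
        if self.mode == "solid_colour" and proximity >= self.show_at:
            self.mode = "media"
        elif self.mode == "media" and proximity <= self.hide_at:
            self.mode = "solid_colour"
        return self.mode


# A viewer walks towards the installation and then away again.
block = BlockState()
for proximity in [0.2, 0.5, 0.7, 0.5, 0.3]:
    print(proximity, block.update(proximity))
```

With a single threshold, the same code would reduce to one comparison per frame. The gap between the two values is a design choice that trades a little responsiveness for stability.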

Interpretation

The idea behind the interaction is the human experience of searching for information. When humans cannot hear something well, we walk closer to the source to hear better. This is also true for Deaf people: when we can’t see something well, we need to be closer to it to process information. For both hearing and Deaf people, the further away we are from the source, the less information we get from our senses. In Deaf culture, we often describe ourselves as moths drawn to the light.

When the blocks change to solid colours, it represents the barrier we experience when we can’t get the information. It forces us to come closer in order to overcome this barrier, and this is a big part of our lives when we have hearing loss.

In my case, I need to sit in the front to see the interpreter in classes or for meetings. Mentally, I’m constantly strategizing ways to get information I need and that can sometimes mean closer contact with people.

For example, I might need to talk to the owner because the cashier doesn’t understand that I need paper and a pen to write down my order, or I need to ask content creators to caption their videos. I always need an interpreter when I’m around people who don’t know sign language; without one, I can’t participate in conversations. These are constant, uncompromising barriers in my life, and in many ways they become physical barriers when I don’t have access.

Final Installation

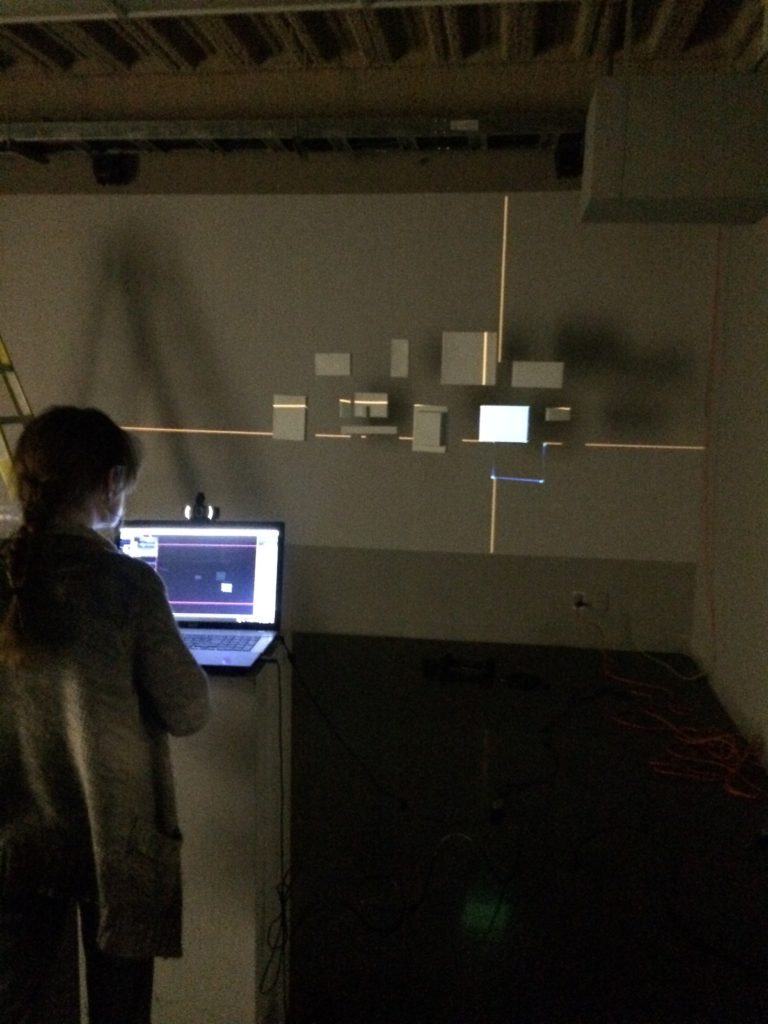

I decided it would be more interesting to suspend all the blocks from the ceiling using transparent fishing line. I set up the camera above them, facing outwards towards viewers, to feed video to the computer in the ceiling. The computer runs the Touch Designer file I made to create these interactive video blocks in my installation.

Image gallery: Arranging the boxes and then hanging them up using transparent fishing lines before mapping using Touch Designer

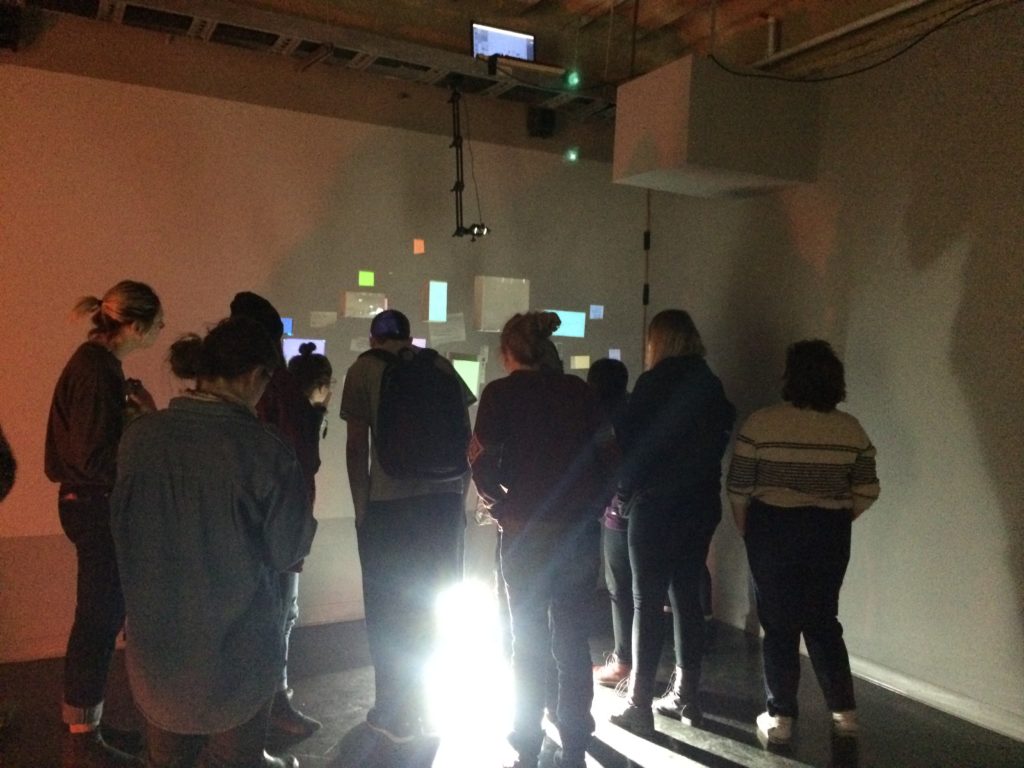

People behaved exactly as I thought they would, straining to find information within the text and images on the blocks. When they walked away from the installation, the blocks changed to solid colours and they could no longer process what was on them, so they had to walk closer to make the blocks change back and see what was on them.

This becomes a metaphor for the unhearing body, which must find ways to close those gaps in information through physical means (e.g. moving closer to see or hear better).

Image gallery: View of people interacting with my installation